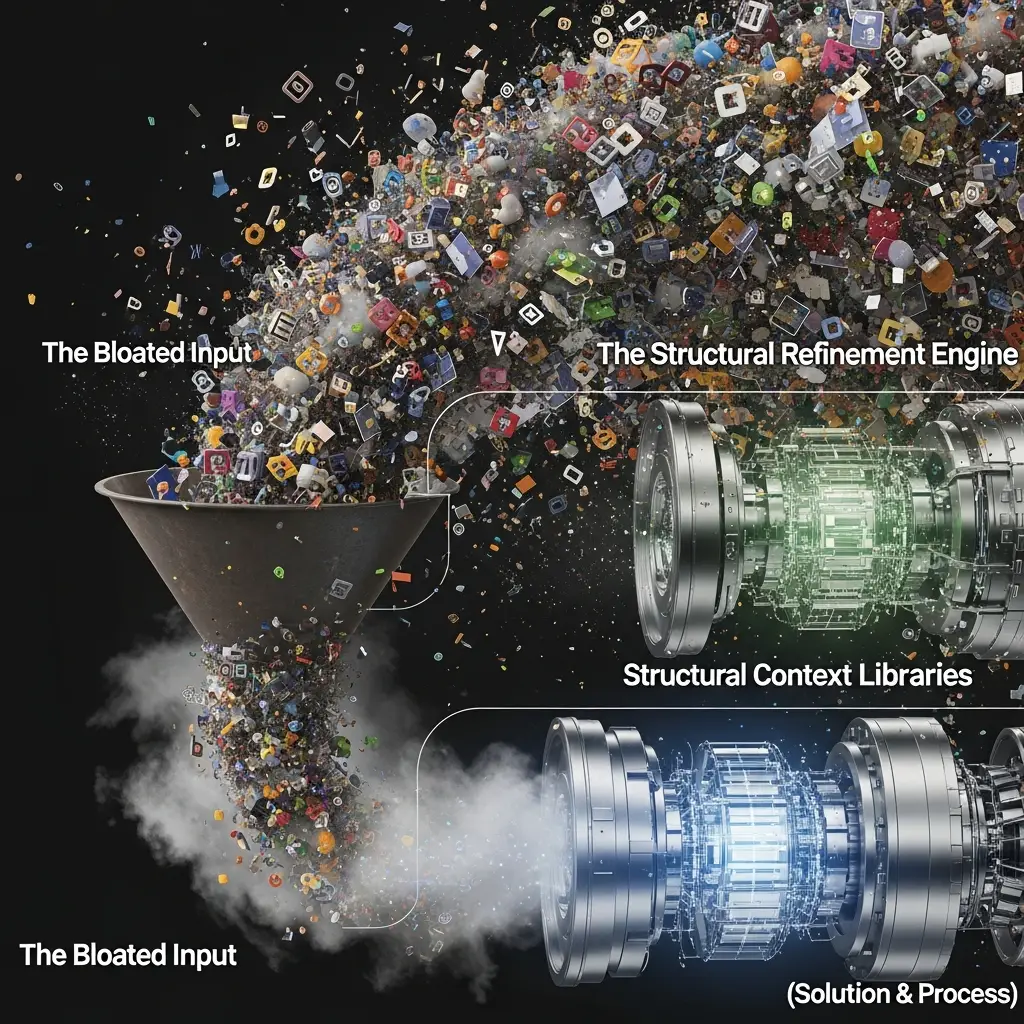

Your AI models are starting to feel like an overstuffed suitcase: bursting at the seams with data, yet still missing the one piece of information that matters. Every extra token feels like a wasted dollar and a missed opportunity to deliver the *exact* message to the *exact* person. We’ve all seen it: the endless battle against “token bloat” while trying to achieve truly meaningful hyper-personalization.

Efficient AI Scaling for Hyper-Personalization

For too long, the conversation around scaling AI for personalized outreach has been dominated by the idea that more is better: more data, more prompts, more tokens, all in the hope of generating something that *might* resonate. That approach is like trying to hit a bullseye with a shotgun blast; you might hit *something*, but you’re guaranteed to waste a lot of ammo (and, in this case, cash).

Scaling AI Productivity: Hyper-Personalization Without Token Bloat

Think about it like this: instead of feeding your AI a whole library every time you want it to write a personalized email, what if you gave it a meticulously curated index and a set of highly specific instructions? For each email, the model would then see only the handful of facts that matter to that recipient, not everything you have ever written. This is the essence of “scaling AI productivity for hyper-personalization without token bloat.” We’re not talking about fancier algorithms or more powerful hardware. We’re talking about smarter input management, the way a master craftsman doesn’t just grab any tool but selects the *exact* tool for the *exact* job.
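To make the “curated index” idea concrete, here is a minimal Python sketch. It assumes a hypothetical content library of short, tagged snippets (`Snippet`, `LIBRARY`, and every helper name are illustrative, not any tool’s API), builds a tag index once, and then assembles each prompt from only the snippets that match a recipient’s interests, stopping at a token budget. Word count stands in for a real tokenizer, so the budget is only approximate.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    """One reusable fact from your content library."""
    text: str
    tags: frozenset


# Hypothetical library: in practice this might hold case studies, offers, FAQs.
LIBRARY = [
    Snippet("Case study: onboarding workflow for a design agency.", frozenset({"agency", "onboarding"})),
    Snippet("Offer: free 30-minute audit of an existing email sequence.", frozenset({"email", "audit"})),
    Snippet("Case study: bookkeeping automation for a solo consultant.", frozenset({"finance", "consultant"})),
    Snippet("FAQ: how pricing works for monthly retainers.", frozenset({"pricing", "retainer"})),
]


def build_index(library):
    """Map each tag to the positions of the snippets that carry it (built once)."""
    index = defaultdict(list)
    for i, snippet in enumerate(library):
        for tag in snippet.tags:
            index[tag].append(i)
    return index


def estimate_tokens(text):
    """Crude proxy (assumption): one token per whitespace-separated word."""
    return len(text.split())


def select_snippets(library, index, interests, max_tokens):
    """Pick only snippets tagged with the recipient's interests, within a token budget."""
    chosen, used, seen = [], 0, set()
    for interest in interests:
        for i in index.get(interest, []):
            if i in seen:
                continue  # a snippet matching two interests is included once
            seen.add(i)
            cost = estimate_tokens(library[i].text)
            if used + cost <= max_tokens:
                chosen.append(library[i])
                used += cost
    return chosen


def build_prompt(name, interests, library, index, max_tokens=40):
    """Short instructions plus only the relevant facts, instead of the whole library."""
    facts = select_snippets(library, index, interests, max_tokens)
    lines = "\n".join(f"- {s.text}" for s in facts)
    return (
        f"Write a three-sentence outreach email to {name}.\n"
        f"Use only these facts:\n{lines}"
    )


if __name__ == "__main__":
    tag_index = build_index(LIBRARY)
    print(build_prompt("Dana", ["agency", "email"], LIBRARY, tag_index))
```

The index is built once and reused for every recipient, so each prompt grows with the recipient’s relevant facts rather than with the size of your whole library.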

Input-First Design: Scalable AI for Hyper-Personalization

The goal here is to move away from “brittle automation” (AI outputs that feel generic despite your best efforts) and toward robust, scalable personalization. That requires treating your input structure as a first-class citizen of your AI workflow, not an afterthought. When you design your prompts and data libraries around how the AI will process them, each request carries fewer wasted tokens and the output stays focused on the details that make it personal.
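One way to treat input structure as a first-class citizen is to separate what never changes (the instructions) from what changes per recipient, and to validate the per-recipient part before anything reaches the model. The sketch below assumes a hypothetical four-field recipient record; the field names, instruction text, and word limit are placeholders to adapt, not a prescribed schema.

```python
from dataclasses import dataclass, fields
from string import Template

# Shared instructions (placeholder text), written once and reused verbatim for every recipient.
INSTRUCTIONS = (
    "You write short, specific outreach emails. "
    "Reference the recipient's recent project and one relevant offer. "
    "Maximum 90 words."
)

# Per-recipient slots: only these values vary between requests.
RECIPIENT_TEMPLATE = Template(
    "Recipient: $name ($role)\nRecent project: $recent_project\nRelevant offer: $offer"
)


@dataclass
class RecipientInput:
    """Hypothetical per-recipient record; field names are illustrative."""
    name: str
    role: str
    recent_project: str
    offer: str

    def __post_init__(self):
        # Reject blank fields up front instead of letting the model fill gaps with filler.
        empty = [f.name for f in fields(self) if not getattr(self, f.name).strip()]
        if empty:
            raise ValueError(f"missing fields: {', '.join(empty)}")


def render_prompt(recipient: RecipientInput) -> str:
    """Combine the fixed instructions with the recipient-specific block."""
    return INSTRUCTIONS + "\n\n" + RECIPIENT_TEMPLATE.substitute(vars(recipient))


if __name__ == "__main__":
    print(render_prompt(RecipientInput(
        name="Dana",
        role="studio owner",
        recent_project="rebrand for a local bakery",
        offer="free audit of an existing email sequence",
    )))
```

Failing fast on an empty field is a deliberate choice: a missing detail is exactly what pushes a model toward generic, brittle output.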

Intelligent Scaling: Hyper-Personalization Beyond Token Bloat

This shift in perspective, from brute-force data feeding to intelligent structural management, is how you can truly scale AI productivity for hyper-personalization without token bloat. The aim is an AI system that is not just capable but efficient: your AI works smarter rather than harder to connect with your audience, while you spend your time and resources on what you do best.

By adopting these structured, disciplined approaches, you’re not just cutting costs; you’re improving the signal-to-noise ratio of your AI’s output. Every token you spend carries information the model actually needs, and that is what creates genuine connections and meaningful engagement. This is the future of hyper-personalization for solopreneurs and freelancers: intelligent, efficient, and built on a solid, scalable foundation.
