Alright, let’s cut through the noise. You’ve probably heard the million-qubit fairy tale. The one where we all wake up in 2035 with fault-tolerant quantum computers effortlessly cracking RSA. Let me tell you, the quantum folks I actually talk to in the trenches aren’t waiting.

Navigating the NISQ Era: Beyond Theoretical Topological Quantum Error Correction

The practitioners I talk to are looking at the NISQ (noisy intermediate-scale quantum) hardware we have *today* and asking, “How do we get business value out of this mess *now*?” We’re not talking theoretical **topological quantum error correction** for some distant future; we’re deep in the weeds of error mitigation, wringing actual utility out of noisy qubits, and frankly, the textbooks are way behind. My team and I have been treating this like an engineering problem, not a theoretical physics exercise.

Engineering Around Imperfect Qubits

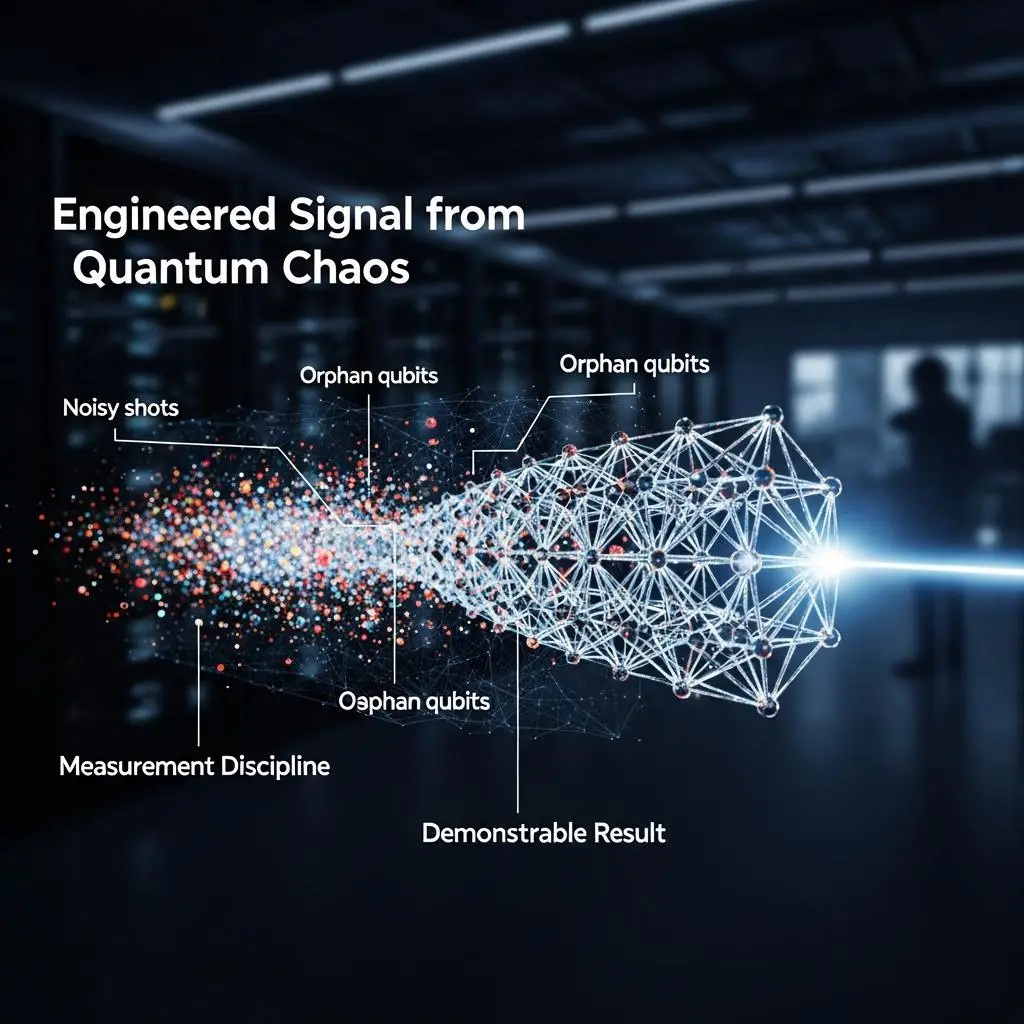

Instead of waiting for the “perfect” logical qubit, we’ve built a programming stack that acknowledges the reality of today’s hardware: the noise, the flaky qubits, the sheer annoyance of measurement latency. The goal? To push NISQ machines into regimes that, by all conventional estimates, should require a fully fault-tolerant future. We’re not trying to *perfect* the hardware; we’re trying to *engineer around* its imperfections to get a demonstrable result.

The H.O.T. Framework: Targeting Cryptographically Relevant Problems

Consider the core of our approach: a three-layer system we call the H.O.T. Framework (Hardware-Optimized Techniques). We’re not running half-adder simulations. We’re targeting cryptographically relevant problems, specifically the elliptic-curve discrete logarithm problem (ECDLP). By mapping Shor-style period finding onto our noise-robust, recursively geometric gate patterns, and then feeding that output into our V5 measurement discipline, we’re getting results that standard resource estimates say should not be feasible on today’s hardware. We’ve seen 21-qubit ECDLP key recoveries on IBM’s Fez backend, reaching a rank of 535/1038 on a 14-bit ECDLP instance.
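To make the idea of a key "rank" concrete, here is a minimal sketch of one way to score noisy period-finding output. It is not the H.O.T. pipeline or the V5 discipline, and it rests on assumptions that are not stated in this post: that each shot yields a pair `(c, d)` satisfying `d ≡ k·c (mod n)` in the noiseless case (the exact relation and sign depend on how the circuit is built), and that candidates are ranked by how many shots vote for them. The names `rank_candidates` and `rank_of` are hypothetical.

```python
from collections import Counter
from math import gcd


def rank_candidates(shots, n):
    """Tally candidate keys k = d * c^{-1} mod n from measured (c, d) pairs.

    shots: dict mapping (c, d) pairs to the number of times they were observed.
    n: order of the group generated by the base point (assumed known).
    """
    votes = Counter()
    for (c, d), count in shots.items():
        if gcd(c, n) != 1:
            continue  # c has no inverse mod n, so this shot casts no vote
        k = (d * pow(c, -1, n)) % n  # modular inverse needs Python 3.8+
        votes[k] += count
    # Most votes first; ties broken by key value so the order is deterministic
    return sorted(votes.items(), key=lambda kv: (-kv[1], kv[0]))


def rank_of(true_key, ranked):
    """1-based position of true_key in the ranked list, or None if it got no votes."""
    for position, (k, _) in enumerate(ranked, start=1):
        if k == true_key:
            return position
    return None


# Toy data (made up for illustration): group order 7, hidden key 3,
# three shots consistent with the key and two corrupted by noise.
shots = {(1, 3): 5, (2, 6): 4, (3, 2): 3, (4, 1): 1, (5, 5): 2}
ranked = rank_candidates(shots, 7)
true_key_rank = rank_of(3, ranked)
```

On a clean run the true key sits at rank 1; as noise grows, wrong candidates collect votes and the true key drifts down the list, which is why a rank is a more informative metric than a plain hit-or-miss.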

Signal from the Noise

The takeaway for you, if you’re looking to push these boundaries: stop treating noise as something to be purely corrected away. Treat it as an input. Understand the specific fingerprint of your backend. Design your circuits not for the ideal machine, but for the machine you have. If you’re seeing noise, you’re likely seeing *signal* too; you just need the right methodology to extract it. The true bottleneck isn’t necessarily gate count, but measurement latency and readout fidelity, and that’s where we’re finding our leverage today. The future isn’t a million qubits; it’s a smarter way to use the qubits we already have.
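If you want a starting point for "fingerprinting" readout on your own backend, the textbook move is confusion-matrix inversion. The sketch below is that standard technique for a single qubit, not our V5 measurement discipline. It assumes you have already calibrated two error rates by preparing |0> and |1> and measuring; the function name `mitigate_readout` and the example numbers are made up for illustration.

```python
def mitigate_readout(counts, e0, e1):
    """Undo single-qubit readout error by inverting a 2x2 confusion matrix.

    counts: raw measurement counts, e.g. {"0": 530, "1": 470}.
    e0: calibrated probability of reading 1 when 0 was prepared.
    e1: calibrated probability of reading 0 when 1 was prepared.
    Returns the estimated true probabilities (p0, p1).
    """
    n0 = counts.get("0", 0)
    n1 = counts.get("1", 0)
    shots = n0 + n1
    if shots == 0:
        raise ValueError("no shots to mitigate")
    p0, p1 = n0 / shots, n1 / shots

    # Confusion matrix M = [[1 - e0, e1], [e0, 1 - e1]] maps true -> observed.
    det = 1.0 - e0 - e1
    if abs(det) < 1e-12:
        raise ValueError("confusion matrix is singular; readout carries no information")

    # Apply the closed-form inverse of M to the observed distribution.
    t0 = ((1.0 - e1) * p0 - e1 * p1) / det
    t1 = ((1.0 - e0) * p1 - e0 * p0) / det

    # Shot noise can push an entry slightly negative; clip and renormalize.
    t0, t1 = max(t0, 0.0), max(t1, 0.0)
    total = t0 + t1
    return t0 / total, t1 / total


# Hypothetical calibration: 2% of prepared 0s read as 1, 8% of prepared 1s read as 0.
mitigated = mitigate_readout({"0": 530, "1": 470}, e0=0.02, e1=0.08)
```

The asymmetry between `e0` and `e1` is part of the fingerprint: it differs per qubit and drifts over time, so the calibration is worth re-running close to the experiment. For many qubits the full confusion matrix grows exponentially, and in practice people fall back on per-qubit (tensored) approximations.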
