Alright, let’s cut through the noise. Look, everyone’s still obsessing over the million-qubit, fault-tolerant machine that’s… well, let’s be honest, it’s sliding into the late 2030s. Meanwhile, we’re in the trenches, wrestling with actual NISQ hardware, and what we’re seeing is that the real win isn’t about building bigger error correction codes; it’s about something far more fundamental, and frankly, much harder to market.

Measurement Hygiene: The NISQ Hardware Bottleneck

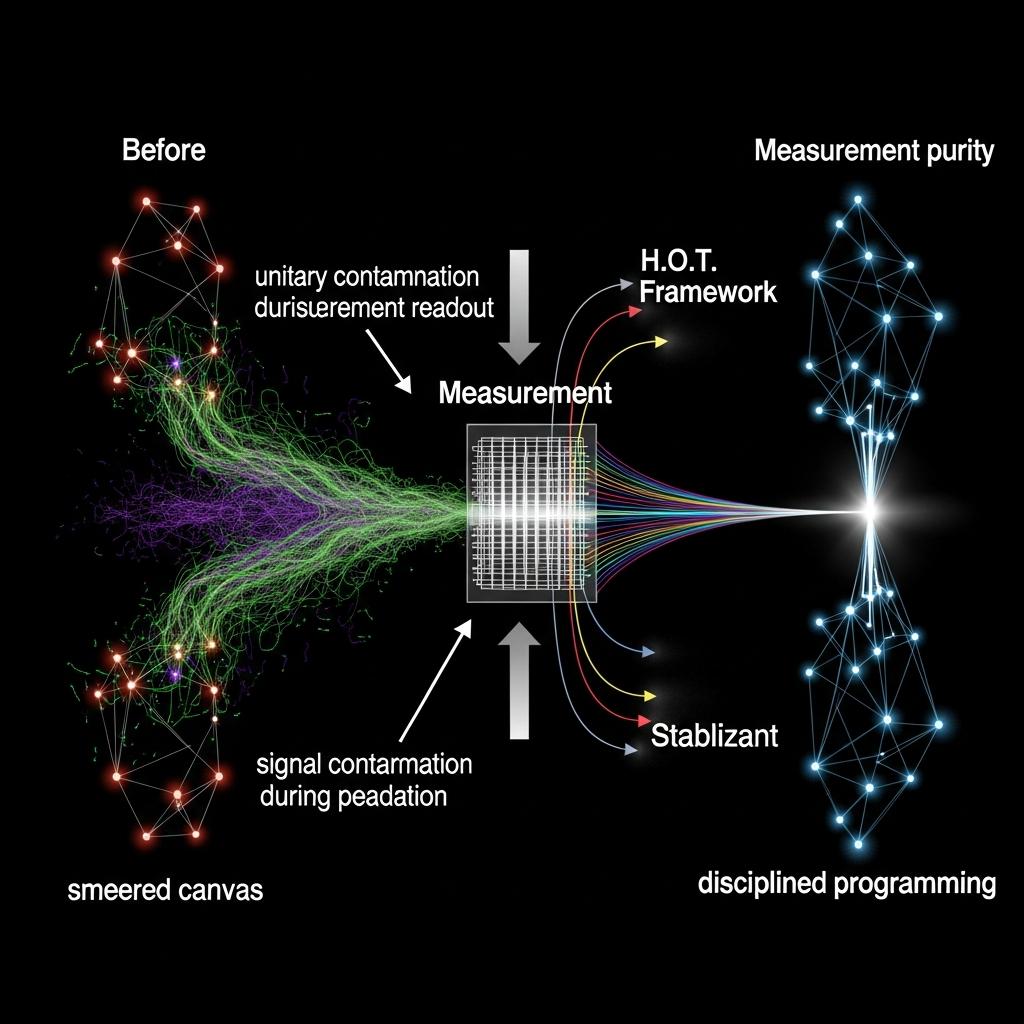

It’s about measurement hygiene. You hear the chatter about complex QEC, but the truth is, if your readout isn’t cleaner than a freshly wiped terminal, all those fancy logical qubits are just feeding you noise. Forget the grand pronouncements about error rates; the bottleneck, the real enemy, is the signal contamination during measurement. Think about it. You spend days calibrating your qubits, tuning gates to the nanosecond, only to have it all crumble at the finish line because your measurement process is… well, leaky.

We’re talking about Unitary Contamination, where those half-collapsed, borderline “poison qubits” drag down the entire outcome. On a V5-scale backend, the sheer latency of readout means this isn’t a minor inconvenience; it’s The Bottleneck. A bad measurement shot can pollute your entire ensemble, making complex algorithms look like random walks. So, what do we do? We stop treating measurement as an afterthought.
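
To put a rough number on why readout dominates, here is a minimal sketch of how per-qubit readout error compounds across a register. It assumes independent, identical readout errors on every qubit, which real backends will not satisfy exactly, and the function name `clean_shot_probability` is ours, purely for illustration.

```python
def clean_shot_probability(readout_fidelity: float, num_qubits: int) -> float:
    """Probability that every qubit in a single shot is read out correctly.

    Assumption: readout errors are independent and identical across qubits,
    so the per-shot success probability is simply fidelity ** num_qubits.
    """
    if not 0.0 <= readout_fidelity <= 1.0:
        raise ValueError("readout_fidelity must be between 0 and 1")
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return readout_fidelity ** num_qubits


if __name__ == "__main__":
    # Illustrative fidelities only; these are not measured backend values.
    for fidelity in (0.90, 0.99, 0.999):
        p = clean_shot_probability(fidelity, 21)
        print(f"fidelity={fidelity:.3f}  P(clean 21-qubit shot)={p:.3f}")
```

Under that simplified model, 90% per-qubit readout fidelity on a 21-qubit register leaves only about 11% of shots with every bit read correctly, which is why the last step can swamp everything that came before it.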

Measurement Hygiene: Optimizing NISQ Hardware Readout

We build it into the core of our programming, not as a patch, but as a feature. We call it the H.O.T. Framework (Hardware-Optimized Techniques), a layered approach where the top layer is all about disciplined readout. For us, noise IS signal in a very literal sense: the anomalous patterns in your measurement outcomes are the fingerprints of your backend’s unique contamination profile. This isn’t just about discarding bad shots.
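
One way to make the "fingerprint" idea concrete is a per-qubit flip profile from calibration shots. This is a sketch under stated assumptions, not the H.O.T. Framework itself: it assumes you ran a circuit that prepares every qubit in 0 and measures immediately, and that results arrive as equal-length bitstrings. The helper names `readout_profile` and `flag_suspect_positions`, and the 0.25 threshold, are hypothetical.

```python
from typing import List


def readout_profile(calibration_shots: List[str]) -> List[float]:
    """Fraction of shots reading '1' at each bitstring position.

    Assumption: every qubit was prepared in 0, so any '1' counts as a flip.
    Index i refers to character i of the bitstring; mapping that position to
    a physical qubit depends on your SDK's bit-ordering convention.
    """
    if not calibration_shots:
        raise ValueError("need at least one calibration shot")
    width = len(calibration_shots[0])
    flips = [0] * width
    for shot in calibration_shots:
        if len(shot) != width:
            raise ValueError("all bitstrings must have the same length")
        for i, bit in enumerate(shot):
            if bit == "1":
                flips[i] += 1
    return [count / len(calibration_shots) for count in flips]


def flag_suspect_positions(profile: List[float], max_flip_rate: float) -> List[int]:
    """Positions whose flip rate exceeds a chosen threshold."""
    return [i for i, rate in enumerate(profile) if rate > max_flip_rate]


if __name__ == "__main__":
    # Toy data for illustration only, not real backend output.
    shots = ["0000", "0100", "0000", "0100", "0001", "0100", "0000", "0100"]
    profile = readout_profile(shots)
    print(profile)
    print(flag_suspect_positions(profile, max_flip_rate=0.25))
```

A fixed threshold is the simplest possible choice; comparing each position against the register's median flip rate would be a reasonable alternative if your backend's baseline drifts.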

The real work is designing circuits that expose these issues, then using that knowledge to filter. We’ve been running experiments, like the 21-qubit ECDLP recoveries on IBM Fez (Job ID: `fez-20240515-143255-231765`), where success hinges on aggressively excluding shots that exhibit outlier qubit statistics. We’re not looking for “good” qubits; we’re identifying “less contaminated” islands of connectivity and ensuring our computation lives within them, or routes around the poison.

If your measurement fidelity is only, say, 90%, and you’re running a circuit where 10% of your qubits are functionally “poison” by the time you read them out, you’re essentially doomed. Real utility, right now, comes from understanding and controlling that last mile: the measurement readout.

Before you get lost in the schematics of the next-gen monolithic quantum computer, ask yourself: is your current measurement process a clean slate, or a smeared canvas? If it’s the latter, no amount of theoretical QEC is going to save your results. It’s time to get serious about measurement hygiene.
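
The "islands of connectivity" idea can be sketched as a graph problem: drop every qubit whose estimated readout error is above a cutoff, then keep the largest group of remaining qubits that are still connected. This is our illustration, not the pipeline used for the Fez runs. The function name `cleanest_island`, the error values, the cutoff, and the 7-qubit line topology are all made up for the example.

```python
from collections import deque
from typing import Dict, Iterable, List, Set, Tuple


def cleanest_island(
    error_by_qubit: Dict[int, float],
    coupling_edges: Iterable[Tuple[int, int]],
    max_error: float,
) -> List[int]:
    """Largest connected set of qubits with readout error <= max_error."""
    clean = {q for q, err in error_by_qubit.items() if err <= max_error}

    # Keep only couplings where both endpoints survived the cutoff.
    neighbors: Dict[int, Set[int]] = {q: set() for q in clean}
    for a, b in coupling_edges:
        if a in clean and b in clean:
            neighbors[a].add(b)
            neighbors[b].add(a)

    best: List[int] = []
    seen: Set[int] = set()
    for start in sorted(clean):
        if start in seen:
            continue
        # Breadth-first search collects one connected component.
        seen.add(start)
        component = [start]
        queue = deque([start])
        while queue:
            q = queue.popleft()
            for nxt in neighbors[q]:
                if nxt not in seen:
                    seen.add(nxt)
                    component.append(nxt)
                    queue.append(nxt)
        if len(component) > len(best):
            best = component
    return sorted(best)


if __name__ == "__main__":
    # Hypothetical 7-qubit line 0-1-2-3-4-5-6 with one bad qubit in the middle.
    errors = {0: 0.01, 1: 0.02, 2: 0.15, 3: 0.01, 4: 0.02, 5: 0.03, 6: 0.01}
    edges = [(i, i + 1) for i in range(6)]
    print(cleanest_island(errors, edges, max_error=0.05))
```

In the toy line above, qubit 2 is the poison: cutting it splits the device into two islands, and the sketch keeps the larger one (qubits 3 through 6). A real layout pass would also need to weigh gate errors and the circuit's own connectivity needs, which this deliberately ignores.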
