Alright, let’s talk about what’s *really* killing your deep NISQ circuits. You’ve dialed in your calibrations, you’ve spent days chasing down stray coherence times, and then BAM – your carefully crafted algorithm turns into a pile of statistical noise right at the readout. We all blame the gates, the crosstalk, the usual suspects. But what if I told you there’s a more insidious enemy lurking?

Unraveling Unitary Contamination in Deep NISQ Circuits

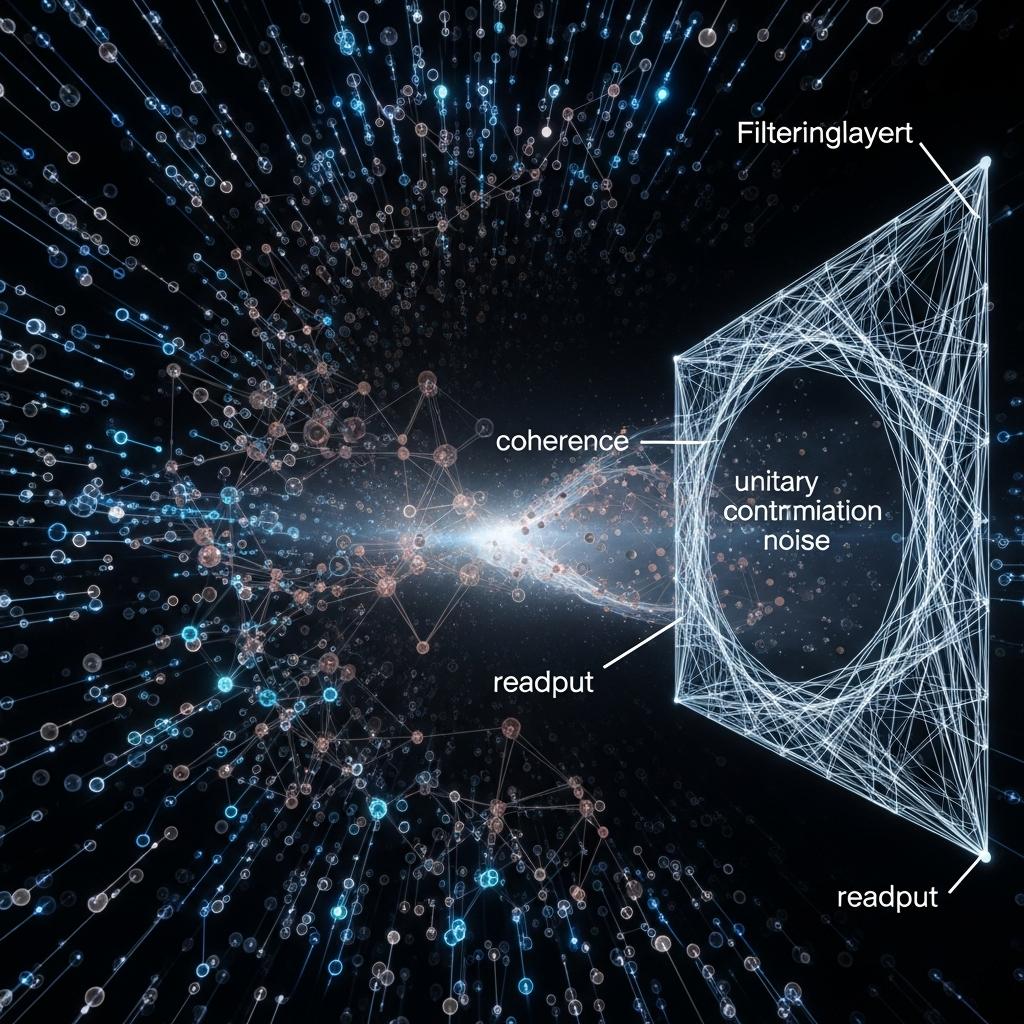

I’m talking about **unitary contamination**. It’s a sneaky coherence killer that slips past your standard error mitigation and doesn’t show up neatly in your gate fidelity reports. Think of it as a qubit that’s partially collapsed, a phantom state that’s just alive enough to taint the entire quantum register during measurement. It’s poisoning your results *before* you even get a clean readout, and your fancy error mitigation might be looking right past it because it’s not the kind of error it’s designed to catch.

Deep NISQ Unitary Contamination Thresholds

The effective coherence of your computation degrades not just from individual qubit decoherence ($T_1$, $T_2$) or gate errors, but from the *crosstalk during readout* of semi-collapsed, or “poison,” qubits. When the ratio of these contaminated qubits to your active, usable qubits exceeds a certain threshold – and we’re seeing it hover around **10%** – the entire system’s unitary evolution becomes irrevocably compromised. It’s not just about discarding bad shots; it’s about recognizing that the *potential* for contamination fundamentally alters the accessible computational space, even before a measurement is finalized.
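To make the threshold concrete, here is a minimal sketch of the bookkeeping. It assumes you already have some way of flagging a qubit as "poisoned" (the post doesn't prescribe one), and it reads the ratio as flagged qubits divided by all active qubits. The function names and the `CONTAMINATION_THRESHOLD` constant are hypothetical, and the 10% value is just the rough figure quoted above, so treat it as a knob to tune rather than a constant of nature.

```python
# Rough ~10% figure from the text; an assumption to tune per backend.
CONTAMINATION_THRESHOLD = 0.10


def poison_qubit_ratio(flagged_qubits, active_qubits):
    """Fraction of active qubits that have been flagged as contaminated.

    Assumption: flagged qubits are counted as part of the active set,
    so the ratio is |flagged AND active| / |active|.
    """
    active = set(active_qubits)
    if not active:
        raise ValueError("active_qubits must be non-empty")
    poisoned = set(flagged_qubits) & active
    return len(poisoned) / len(active)


def exceeds_threshold(flagged_qubits, active_qubits,
                      threshold=CONTAMINATION_THRESHOLD):
    """True when the poison qubit ratio is strictly above the threshold."""
    return poison_qubit_ratio(flagged_qubits, active_qubits) > threshold
```

With 20 active qubits, one flagged qubit stays under the line and three put you over it. If you prefer the other reading (poisoned versus clean qubits only), change the denominator accordingly.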

Mitigating Unitary Contamination in Deep NISQ Circuits

This implies a new benchmark for circuit viability: not qubit count or gate fidelity alone, but a metric that also factors in the “poison qubit ratio” and the latency of exclusion. We’ve been implementing what we call the **H.O.T. Framework** (Hardware-Optimized Techniques), and a core component is “V5 orphan measurement exclusion”: a disciplined measurement and post-selection layer.
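The post doesn't spell out the internals of that exclusion layer, so the following is only one plausible reading of "measurement and post-selection", not the H.O.T. implementation. It assumes each shot comes back as a bitstring, that certain string positions act as contamination flags, and that any shot with a raised flag gets discarded. The name `exclude_orphan_shots` and the `flag_positions` parameter are hypothetical, and positions here are plain string indices, so map them to your backend's bit ordering yourself.

```python
def exclude_orphan_shots(counts, flag_positions):
    """Post-select measurement counts on a set of flag bits.

    counts: dict mapping bitstring -> number of shots.
    flag_positions: string indices treated as contamination flags
                    (an assumption of this sketch).

    Returns (kept_counts, kept_fraction), where kept_counts has the
    flag bits stripped out of each surviving bitstring.
    """
    flags = set(flag_positions)
    kept = {}
    total_shots = 0
    kept_shots = 0
    for bitstring, n in counts.items():
        total_shots += n
        # Drop the whole shot if any flag bit fired.
        if any(bitstring[i] == "1" for i in flags):
            continue
        data_bits = "".join(
            b for i, b in enumerate(bitstring) if i not in flags
        )
        kept[data_bits] = kept.get(data_bits, 0) + n
        kept_shots += n
    kept_fraction = kept_shots / total_shots if total_shots else 0.0
    return kept, kept_fraction
```

Keep an eye on `kept_fraction`: post-selection buys cleaner statistics at the cost of shots, and if you are throwing most of them away, that is a result worth reporting too.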

Active Unitary Contamination Management for Deep NISQ Circuits

The takeaway for you, the academic rebel and boundary-pushing programmer, is this: stop treating measurement as a passive output. Treat it as an active participant in your error mitigation strategy. Start thinking about how **unitary contamination** and the “poison qubit ratio” are silently capping your NISQ circuit performance, especially in deep computations. The hardware is speaking to you through its noise profile; the challenge is learning to interpret it.

Consider this a testable hypothesis: implement robust orphan measurement exclusion and noise-aware circuit design. Benchmark ECDLP (elliptic-curve discrete logarithm problem) instances, or other deep circuits, on your chosen backends. Track your “poison qubit ratio” and correlate it with the success rate of your key recovery or computation.

You might find that the true bottleneck for deep NISQ circuits isn’t the number of gates you can execute, but how well you can shield your computation from the lingering ghosts of measurement. The door to useful quantum computation is already open, if you know where to look and how to filter.
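If you want to run the correlation step of that hypothesis, a plain Pearson coefficient is a reasonable first pass. This is a minimal sketch: it assumes you have logged one poison qubit ratio and one success rate per benchmark run, and the function name `pearson_r` is just illustrative. A strongly negative value would be consistent with the claim above, while a value near zero would count against it.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences.

    Intended use in this sketch: xs = poison qubit ratio per run,
    ys = success rate per run.
    """
    if len(xs) != len(ys):
        raise ValueError("xs and ys must have the same length")
    if len(xs) < 2:
        raise ValueError("need at least two runs to correlate")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)
```

Correlation alone won't separate contamination from everything else that gets worse as circuits get deeper, so compare runs at matched depth and qubit count where you can.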
